The conversation about reducing technical debt usually starts in the wrong place.

Most teams treat it as a scheduling problem: When is there a good time to slow down and clean things up? The answer, reliably, is never. There is always a committed roadmap, a stakeholder preparing for a demo, or a feature that “can’t wait.” So the cleanup sprint gets pushed to next quarter, then the one after that, until the team is operating at a fraction of its former velocity and no one can clearly explain why.

The framing is wrong because debt reduction is not a project to be scheduled. It is a cost to be managed continuously, the same way any team manages cycle time or sprint capacity. Teams that make meaningful progress on debt are not the ones who finally found the right quarter. They are the ones who stopped waiting for one.

The most damaging technical debt in a scaling codebase is rarely the kind engineers flag explicitly. It is the accumulated friction that shows up everywhere and gets attributed to nothing in particular. Estimates creep up. Bug fixes take longer than they should. Stories that looked straightforward in planning turn into multi-day investigations. The sprint closes, velocity looks acceptable, and nobody connects the pattern to the underlying cause.

Across every team size and industry, the pattern is consistent: engineers absorb the friction silently, leadership stays unaware, and the PM is making roadmap commitments against capacity that is already partially consumed. The 2024 Stack Overflow Developer Survey found that technical debt is the top frustration at work for professional developers. What that signals is not a morale problem. It is an information gap between the people doing the work and the people setting the priorities.

The reason most teams cannot see the debt that is hurting them is that they are looking for it in the wrong place. They scan for the modules engineers complain about explicitly. The more dangerous debt is the kind that has normalised. Nobody flags it anymore because it has always been there. It shows up only when you measure the delivery signals it produces.

When engineers raise technical debt in planning, they describe it in technical terms. The service is tightly coupled. Test coverage is inadequate. The authentication module needs refactoring before it can safely be extended. These descriptions are accurate. They are also unhelpful for a PM or engineering director trying to make a prioritisation call against committed feature work, because features have business cases and debt has engineering complaints.

The translation layer that is missing is delivery impact. Not what the debt is, but what it is costing the roadmap right now. Until you can express debt in terms that leadership already tracks, it will keep losing to features in every planning conversation. Three measures close that gap.

Sprint tax is the simplest. Track how much of each sprint is consumed by unplanned work: bug fixes that were not in the plan, investigations that did not produce a ticket, rework on things that should have been done right the first time. That percentage is your sprint tax. A team running on a clean codebase typically sees unplanned work in the 10 to 15 percent range. Teams carrying significant debt frequently run at 30 to 40 percent without realising it, because the unplanned work is distributed across the sprint in small increments rather than showing up as a single visible cost. Add it up over a quarter and you have a concrete number: this is what debt is costing the roadmap, expressed in terms everyone can read.
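As a rough illustration, the arithmetic can be sketched in a few lines of Python. The `SprintLog` record, its field names, and the sample numbers are assumptions made for the example; in practice the planned and unplanned totals would come from however your team already tags work.

```python
from dataclasses import dataclass


@dataclass
class SprintLog:
    """Hypothetical record of one sprint's effort, in story points or hours."""
    name: str
    planned: float    # effort spent on work that was in the sprint plan
    unplanned: float  # unplanned bug fixes, investigations, rework


def sprint_tax(sprint: SprintLog) -> float:
    """Share of the sprint's total effort consumed by unplanned work."""
    total = sprint.planned + sprint.unplanned
    return sprint.unplanned / total if total else 0.0


def quarterly_tax(sprints: list[SprintLog]) -> float:
    """Effort-weighted sprint tax across a run of sprints."""
    total = sum(s.planned + s.unplanned for s in sprints)
    unplanned = sum(s.unplanned for s in sprints)
    return unplanned / total if total else 0.0


# Illustrative numbers only, not measurements from a real team.
quarter = [
    SprintLog("S1", planned=30, unplanned=10),
    SprintLog("S2", planned=26, unplanned=14),
    SprintLog("S3", planned=28, unplanned=12),
]
for s in quarter:
    print(f"{s.name}: {sprint_tax(s):.0%}")
print(f"Quarter: {quarterly_tax(quarter):.0%}")
```

Weighting the quarterly figure by effort, rather than averaging the per-sprint percentages, keeps a short or unusually light sprint from skewing the number.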

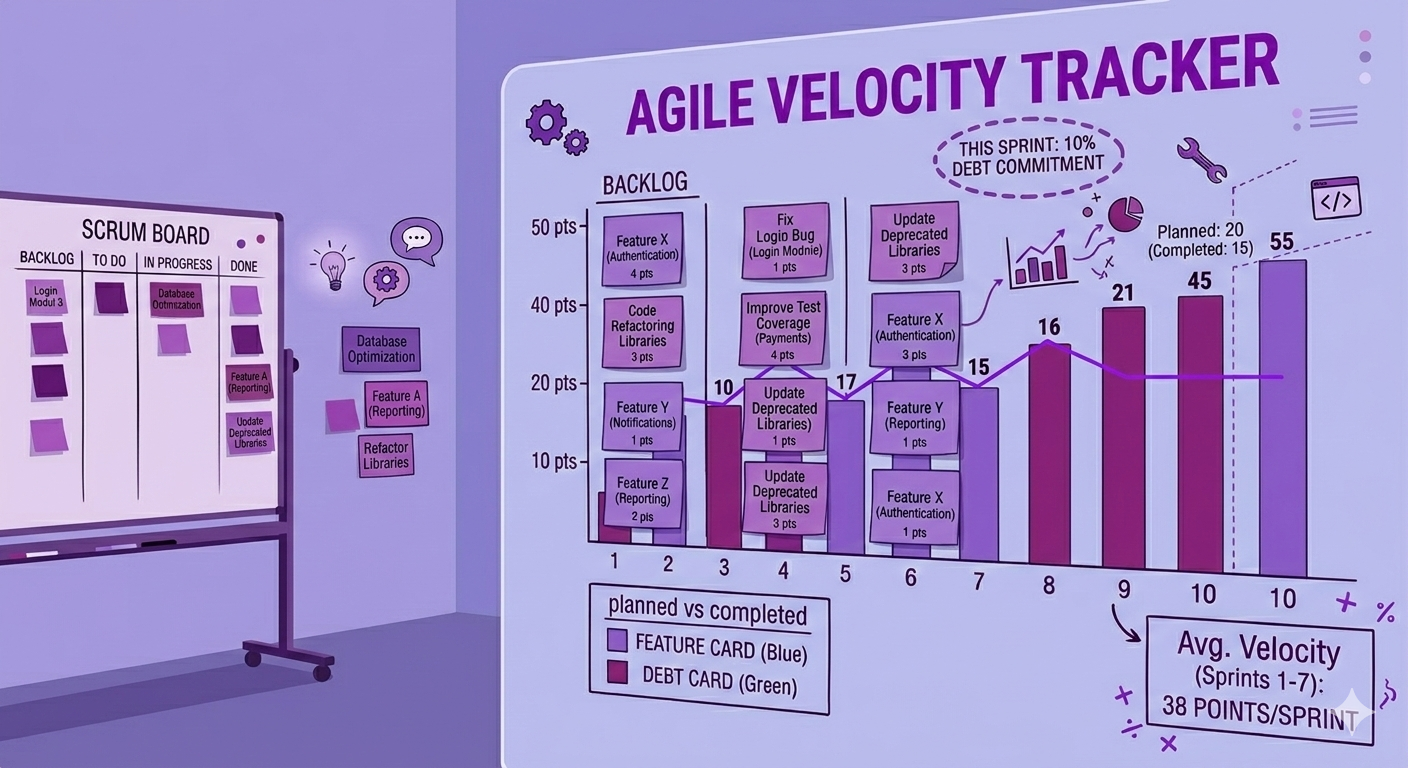

Velocity drag is the trend line. Take your rolling average velocity over the past six to eight sprints and examine the direction. A team holding roughly steady or improving modestly is healthy. A slow downward drift, or high variance that makes planning unreliable, is a debt signal. If there is no clear external cause like team changes or scope inflation, that pattern is almost always the codebase. No single sprint makes it obvious. Across two quarters it represents real roadmap slippage that compounds quietly.
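One way to put numbers on the direction and the variance is a least-squares slope plus a coefficient of variation over the recent sprints. This is a minimal sketch, not a prescribed method: the function names and the sample velocities are invented for the example, and what counts as a worrying slope is a judgment call for each team.

```python
from statistics import mean, pstdev


def velocity_trend(velocities: list[float]) -> float:
    """Least-squares slope of velocity over sprint index (points per sprint)."""
    n = len(velocities)
    if n < 2:
        return 0.0
    x_mean = (n - 1) / 2
    y_mean = mean(velocities)
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(velocities))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def velocity_variation(velocities: list[float]) -> float:
    """Coefficient of variation: standard deviation relative to the mean."""
    avg = mean(velocities)
    return pstdev(velocities) / avg if avg else 0.0


# Illustrative velocities for the last eight sprints, oldest first.
recent = [42, 41, 39, 40, 37, 36, 35, 34]
slope = velocity_trend(recent)
print(f"trend: {slope:+.2f} points per sprint")
print(f"variation: {velocity_variation(recent):.1%}")
```

A negative slope that persists across the window is the slow drift described above; a high variation figure with a flat slope is the unreliable-planning case.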

Escalating bug rates in stable modules are the most telling measure. New features introducing bugs are expected. Defects appearing in parts of the system that have not been recently touched indicate that changes in one area are producing unexpected side effects in others. That is the delivery signature of high coupling and low test coverage. Tracking defect origin by module over time reveals where debt is concentrated before it spreads, and it does so in terms that connect directly to delivery risk.
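Tracking defect origin by module can start as a simple tally. The sketch below assumes a hypothetical `Bug` record and a map of which modules were changed in each sprint, and it simplifies "not recently touched" to "not changed in the sprint the bug was reported"; a real version would likely use a longer look-back window.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Bug:
    """Hypothetical bug record; field names are illustrative."""
    module: str  # module where the defect originated
    sprint: int  # sprint in which the bug was reported


def stable_module_bugs(bugs: list[Bug], touched: dict[int, set[str]]) -> Counter:
    """Count bugs per (module, sprint) for modules not changed in that sprint.

    `touched` maps a sprint number to the set of modules modified in it.
    """
    counts: Counter = Counter()
    for bug in bugs:
        if bug.module not in touched.get(bug.sprint, set()):
            counts[(bug.module, bug.sprint)] += 1
    return counts


def escalating(counts: Counter, module: str, sprints: list[int]) -> bool:
    """True if the module's stable-bug count never falls and ends higher."""
    series = [counts[(module, s)] for s in sprints]
    rising = all(a <= b for a, b in zip(series, series[1:]))
    return rising and series[-1] > series[0]


# Illustrative data: "billing" was never touched, "search" was changed every sprint.
bugs = [
    Bug("billing", 1),
    Bug("billing", 2), Bug("billing", 2),
    Bug("billing", 3), Bug("billing", 3), Bug("billing", 3),
    Bug("search", 2), Bug("search", 3),
]
touched = {1: {"search"}, 2: {"search"}, 3: {"search"}}
counts = stable_module_bugs(bugs, touched)
for module in ("billing", "search"):
    print(module, escalating(counts, module, sprints=[1, 2, 3]))
```

Bugs in modules under active change are filtered out on purpose, since those are the expected kind.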

Together these three measures let you enter a planning conversation with specifics rather than symptoms: the team is losing 35 percent of sprint capacity to unplanned work, velocity has drifted downward over the last two quarters, and the majority of recent bugs are coming from modules on the critical path for next quarter. That is a different conversation than "the engineers say we need a refactor."

Once you can see the debt's delivery impact, the next mistake is treating it as a single undifferentiated problem. Most codebases have debt across dozens of areas. The question is never how do we eliminate all of it. It is which debt is actively hurting delivery now, and which can wait.

A scoring approach makes this tractable. For each area of identified debt, assess it on four dimensions: whether it is on the critical path for near-term roadmap work, its current defect rate, whether work in that area consistently runs over estimate, and whether the fix is scoped and bounded or open-ended. The first three tell you where the debt is costing delivery. The fourth tells you whether you can act on it in the near term without committing to a major architectural change.
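A minimal sketch of such a scorecard follows. The 0 to 3 scale, the equal weighting of the three impact dimensions, and the sample areas are all assumptions for illustration; the four dimensions themselves are the ones described above.

```python
from dataclasses import dataclass


@dataclass
class DebtArea:
    """One area of identified debt, scored 0 (low) to 3 (high) per dimension."""
    name: str
    critical_path: int      # on the critical path for near-term roadmap work
    defect_rate: int        # current defect rate
    estimate_overrun: int   # work here consistently runs over estimate
    bounded_fix: int        # 3 = scoped and bounded, 0 = open-ended


def impact(area: DebtArea) -> int:
    """Delivery cost: the first three dimensions, equally weighted (an assumption)."""
    return area.critical_path + area.defect_rate + area.estimate_overrun


def rank(areas: list[DebtArea]) -> list[DebtArea]:
    """Highest impact first; among equals, the more bounded fix comes first."""
    return sorted(areas, key=lambda a: (impact(a), a.bounded_fix), reverse=True)


# Illustrative areas and scores, not taken from a real codebase.
areas = [
    DebtArea("auth module", critical_path=3, defect_rate=2, estimate_overrun=3, bounded_fix=1),
    DebtArea("billing export", critical_path=3, defect_rate=3, estimate_overrun=2, bounded_fix=3),
    DebtArea("legacy reporting", critical_path=0, defect_rate=1, estimate_overrun=2, bounded_fix=2),
]
for area in rank(areas):
    print(f"{area.name}: impact={impact(area)} bounded={area.bounded_fix}")
```

Keeping boundedness as a tie-breaker rather than adding it into the total reflects the split above: the first three dimensions say where debt hurts, the fourth says what can be acted on soon.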

The scoring is not precision analysis. It is a prioritisation forcing function. It converts the conversation from "we have debt everywhere" to "here are the three areas where debt is actively costing delivery, and here is the order we would address them." That conversation gets heard in planning. The other one does not.

A 2025 CAST report, based on analysis of more than 10 billion lines of code, put the scale of global technical debt at 61 billion workdays of repair time. The point is not despair. It is that you cannot address all of it, and trying to treat it as a single problem to be eliminated is the surest way to make no progress on any of it. Triage is not a compromise. It is the only approach that works.

The mechanism that works consistently is continuous allocation. Reserve a fixed percentage of sprint capacity for debt reduction and protect it the same way you protect a committed feature. Not whatever is left over after features are done. A named, prioritised allocation that ships regardless of what else is happening.

The right allocation depends on the current debt load and will shift as the highest-impact areas get addressed. The specific number matters less than the consistency. A team that protects a fixed slice of every sprint makes more progress than a team that runs at zero for two months and then attempts a dedicated cleanup sprint that gets cut before it finishes.
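The difference between a protected allocation and "whatever is left over" can be shown in a small planning sketch. The 20 percent share, the ticket names, and the point values are hypothetical; the point is only that the debt budget is carved out before feature work is planned.

```python
def fill(backlog: list[tuple[str, float]], budget: float) -> list[str]:
    """Take tickets in priority order while they still fit in the budget."""
    picked = []
    remaining = budget
    for name, points in backlog:
        if points <= remaining:
            picked.append(name)
            remaining -= points
    return picked


def plan_sprint(capacity: float, debt_share: float,
                debt_backlog: list[tuple[str, float]],
                feature_backlog: list[tuple[str, float]]) -> dict[str, list[str]]:
    """Reserve the debt budget first; features plan against what remains."""
    debt_budget = capacity * debt_share
    feature_budget = capacity - debt_budget
    return {
        "debt": fill(debt_backlog, debt_budget),
        "features": fill(feature_backlog, feature_budget),
    }


# Illustrative backlogs as (ticket, points), already in priority order.
debt_backlog = [("Split auth session store", 5), ("Add billing contract tests", 3),
                ("Remove legacy exporter", 5)]
feature_backlog = [("Checkout v2", 13), ("Search filters", 13), ("Admin export", 8)]

plan = plan_sprint(capacity=40, debt_share=0.2,
                   debt_backlog=debt_backlog, feature_backlog=feature_backlog)
print(plan)
```

Because features are planned against the remainder, a large feature cannot quietly absorb the debt slice.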

What goes into that allocation is determined by the scoring exercise. At the start of each quarter, identify the top debt areas by impact and break the highest-priority refactors into sprint-sized pieces. A bounded refactor that would take three weeks becomes three separate sprint tickets, each with a clear scope and a clear definition of done. Debt that lives in Jira as a real ticket with a real owner and a real definition of done gets done. Debt that lives in a vague epic called "Tech Debt Q3" does not.

The strangler fig pattern is the right approach for large, high-risk modules that cannot be rewritten in a single effort. You build the new implementation alongside the existing one, gradually routing to the new version until the old one can be removed. There is no big-bang cutover and no moment where the team has to pause feature work for a full rewrite. The old code keeps running while the replacement is built incrementally.
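In code, the routing step often amounts to a thin wrapper in front of both implementations. The sketch below is one possible shape, assuming a percentage-based rollout keyed on a stable identifier; `legacy_total`, `new_total`, and `StranglerRouter` are hypothetical names, and real systems frequently route at the HTTP or service layer instead.

```python
import zlib
from typing import Callable


def legacy_total(prices: list[float]) -> tuple[str, float]:
    """Stand-in for the existing implementation (hypothetical)."""
    return ("legacy", sum(prices))


def new_total(prices: list[float]) -> tuple[str, float]:
    """Stand-in for the replacement built alongside it (hypothetical)."""
    return ("new", sum(prices))


class StranglerRouter:
    """Sends a stable share of callers to the replacement, the rest to legacy."""

    def __init__(self, legacy: Callable, replacement: Callable, rollout_percent: int = 0):
        self.legacy = legacy
        self.replacement = replacement
        self.rollout_percent = rollout_percent  # 0 = all legacy, 100 = all new

    def __call__(self, routing_key: str, *args, **kwargs):
        # A stable hash keeps each key on the same implementation between calls.
        bucket = zlib.crc32(routing_key.encode("utf-8")) % 100
        target = self.replacement if bucket < self.rollout_percent else self.legacy
        return target(*args, **kwargs)


invoice_total = StranglerRouter(legacy_total, new_total, rollout_percent=25)
print(invoice_total("customer-1042", [19.99, 5.00]))
```

Raising `rollout_percent` over successive sprints is the gradual routing; once it reaches 100 and stays there, the legacy function and the router itself can be deleted.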

Boy scout refactoring is the lowest-overhead approach. The principle is simple: leave the code cleaner than you found it. When an engineer touches a module for a feature or bug fix, they make targeted improvements to the surrounding code as part of that ticket. Not a full refactor. Incremental cleanup. Across a quarter it meaningfully improves the quality of the modules being actively worked, without appearing as a separate sprint cost.

In most debt-heavy codebases, low test coverage is what makes refactoring feel dangerous. Engineers avoid touching certain modules not because the code is hard to understand, but because there is no safety net. A change goes in, something breaks two systems over, and nobody knows why until production. The fix is not always the refactor. Sometimes it is writing the tests first, so the next person who touches the module can actually see what they broke.
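A common way to write the tests first is a characterization test: record what the code does today, quirks included, and assert that it keeps doing it. The function below is an invented stand-in for an untested legacy routine, and the pinned values were obtained by running it, not by reading a specification.

```python
def legacy_shipping_cost(weight_kg: float, express: bool) -> float:
    """Stand-in for an untested legacy function (hypothetical)."""
    cost = 5.0 + 1.2 * weight_kg
    if express:
        cost *= 1.5
    if weight_kg > 20:
        cost += 10  # surcharge nobody remembers adding
    return round(cost, 2)


# Current behaviour, captured by running the function, not derived from a spec.
PINNED = {
    (1, False): 6.2,
    (1, True): 9.3,
    (25, False): 45.0,
}


def test_shipping_cost_characterization():
    """Fails as soon as a refactor changes any pinned result."""
    for (weight, express), expected in PINNED.items():
        actual = legacy_shipping_cost(weight, express)
        assert actual == expected, f"{weight}kg express={express}: {actual} != {expected}"
```

A test like this says nothing about whether the surcharge is correct. It only makes a change in behaviour visible at the moment it happens, which is the safety net the refactor needs.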

For teams running AI coding tools, code review standards need to evolve alongside the tooling. AI-assisted code ships at higher volume and faster than traditional development, which can accelerate debt accumulation if reviewers are not specifically checking for complexity, duplication, and long-term maintainability. A targeted addition to your code review checklist that flags AI-generated patterns worth scrutinising is low cost and high return. The tooling amplifies what the process already enforces. If the process does not catch shortcuts, the tooling makes shortcuts faster.

After watching the same failure modes repeat across scaling teams, one pattern stands out: most tooling optimises for reporting, not execution. Dashboards show what happened. They do not surface what is drifting.

DevHawk watches signals across Jira and GitHub that indicate where delivery health is diverging from reported velocity. A ticket that has been sitting in "In Progress" for 48 hours with no associated commits. A PR that has been open past the team's review threshold with no activity. A story that has been reopened three times in the same sprint. A module that keeps appearing in bug tickets and rework cycles across multiple sprints. These are the early signals that debt is actively hurting delivery, and they are almost always invisible in status reports until the damage is done.
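To make the kind of rule concrete, here is a generic sketch of two of those checks. It is not DevHawk's implementation or API: the `Ticket` shape, the field names, and the reading of "no associated commits" as "no commit since the ticket entered In Progress" are all assumptions for illustration, with thresholds taken from the examples above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Ticket:
    """Hypothetical ticket snapshot joined from an issue tracker and a repo."""
    key: str
    status: str
    status_since: datetime              # when it entered the current status
    last_commit_at: Optional[datetime]  # most recent linked commit, if any
    reopen_count: int = 0               # reopens within the current sprint


def stall_signals(ticket: Ticket, now: datetime,
                  idle_limit: timedelta = timedelta(hours=48),
                  reopen_limit: int = 3) -> list[str]:
    """Return the names of the early-warning signals this ticket trips."""
    signals = []
    in_progress_long = (ticket.status == "In Progress"
                        and now - ticket.status_since >= idle_limit)
    no_commits = (ticket.last_commit_at is None
                  or ticket.last_commit_at < ticket.status_since)
    if in_progress_long and no_commits:
        signals.append("in progress with no commits")
    if ticket.reopen_count >= reopen_limit:
        signals.append("reopened repeatedly")
    return signals


now = datetime(2025, 3, 14, 9, 0)
quiet = Ticket("PAY-101", "In Progress", now - timedelta(hours=60), None)
active = Ticket("PAY-102", "In Progress", now - timedelta(hours=60),
                now - timedelta(hours=2))
churning = Ticket("PAY-103", "In Review", now - timedelta(hours=5), None, reopen_count=3)
for t in (quiet, active, churning):
    print(t.key, stall_signals(t, now))
```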

When those signals appear, DevHawk triggers a direct follow-up in Slack to the owner of that work, with context. Not a dashboard notification. A specific prompt to the specific person accountable. If the work still does not move, it escalates based on rules the team defines: to a tech lead, a PM, or whoever owns the escalation path for that type of stall.

For PMs accountable for delivery, that loop matters because it closes the gap between "something is drifting" and "someone did something about it" without requiring a status meeting to find it. The debt prioritisation conversation is also sharper when it is grounded in current delivery signals rather than engineering intuition from the last retrospective.

One realism note: DevHawk works best when ownership is clear. If every ticket has a real owner and "done" means something specific, the follow-up loops reinforce good practice. If ownership is ambiguous, automation amplifies the confusion rather than resolving it.

Teams that reduce debt without derailing delivery follow the same sequence. They measure first, expressing debt in delivery terms rather than technical ones. They triage by impact, focusing on what is on the critical path rather than what is most aesthetically offensive to engineers. They allocate continuously, protecting a fixed share of sprint capacity the same way they protect committed features. And they size the work to sprint boundaries, making debt reduction visible and trackable rather than vague and deferrable.

The teams that do not recover keep treating debt as a project to be scheduled. The cleanup sprint keeps getting deferred. The debt keeps compounding. Velocity keeps drifting. And the planning conversation keeps circling the same question: why can the engineers not just ship features faster?

Technical debt is not an engineering problem waiting for an engineering solution. It is a delivery tax that compounds silently until someone decides to pay it down systematically. The earlier that decision gets made, the cheaper it is.

If your system only works because someone is manually chasing these signals, it is not a system. It is a person.

How do you quantify technical debt for leadership?

Express it in delivery terms, not technical ones. Sprint tax (the percentage of capacity consumed by unplanned rework), velocity drag (a downward trend in sprint output over rolling quarters), and escalating bug rates in stable modules give leadership a clear picture of what debt is costing the roadmap without requiring technical context. The goal is not a perfect measurement. It is a concrete enough signal that debt can compete with features in a prioritisation conversation.

How do you prioritise which technical debt to address first?

Score each area on four dimensions: whether it is on the critical path for near-term roadmap work, its current defect rate, whether work in that area consistently runs over estimate, and whether the fix is bounded and estimable. Address the highest-scoring areas first, starting with those that are both high-impact and have a bounded, estimable fix. Open-ended architectural rewrites that score high on impact but low on boundedness are candidates for the strangler fig approach rather than direct sprint allocation.

How much sprint capacity should go to technical debt?

Teams in good shape typically run at 15 to 20 percent. Teams actively recovering from significant accumulated debt may need to run higher temporarily. The number matters less than the consistency. A protected, continuous allocation that runs every sprint outperforms periodic cleanup sprints in both total debt reduction and delivery stability. The cleanup sprint gets cut. The protected allocation does not.

What is the first signal that technical debt is about to become a delivery crisis?

Not a single failure. A pattern. Estimates creeping upward without scope changing. Bug fixes introducing new bugs. Features that should take days taking weeks. Engineers visibly stretched while velocity metrics look acceptable. When reported throughput and actual delivery effort are moving in opposite directions, the debt has started compounding faster than the team can absorb. That tipping point is almost always visible in team behaviour and rising estimates well before it surfaces in any dashboard.


CAST, "Coding in the Red: The State of Global Technical Debt 2025." Analysis of more than 10 billion lines of code across 3,000 companies in 17 countries. September 2025.

Stack Overflow, "2024 Developer Survey." Annual survey of 65,000+ developers across 180 countries on tooling, practices, and workplace experience.