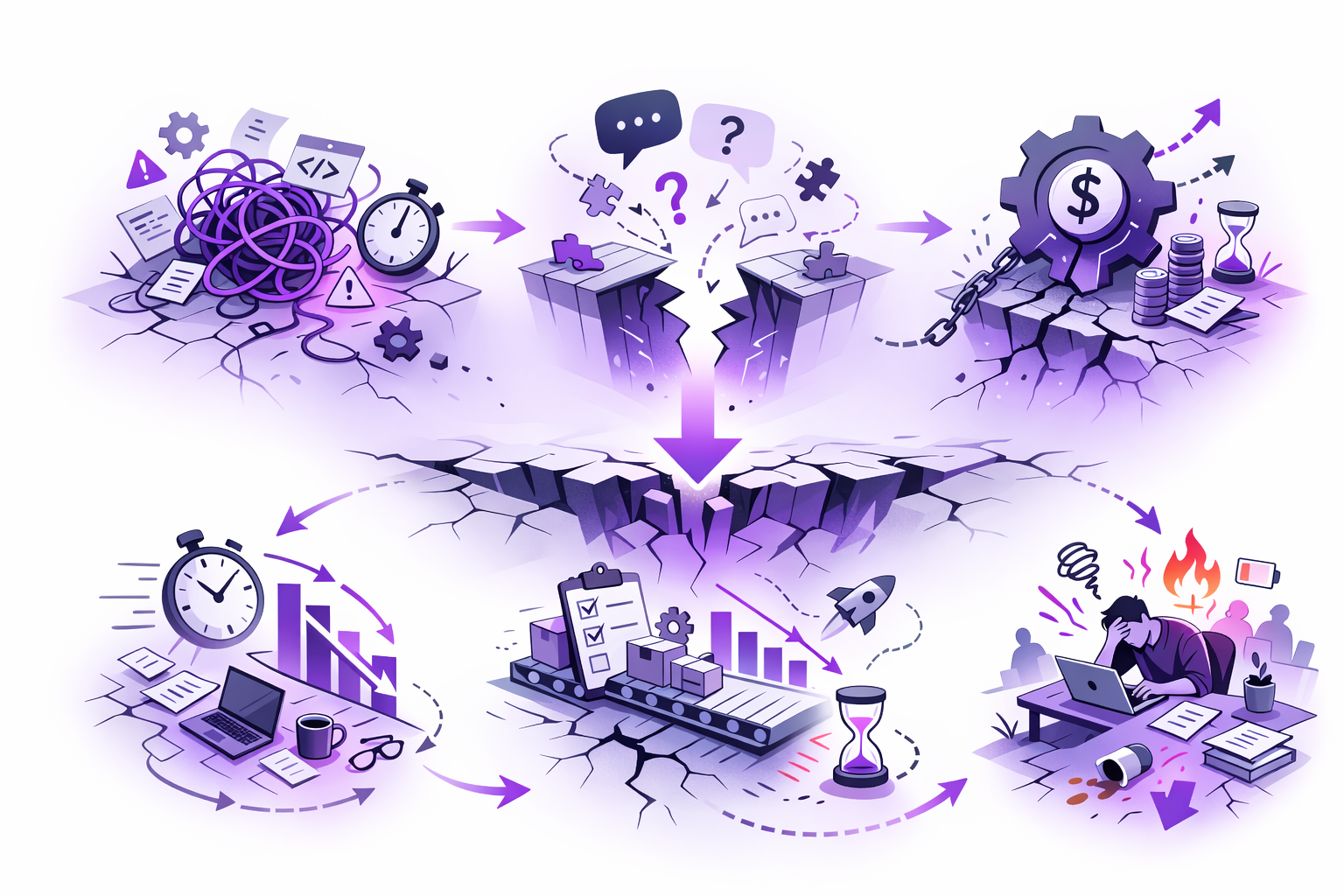

Technical debt isn’t a future problem. It’s already slowing teams down through unreliable estimates, longer reviews, and constant friction that compounds every sprint. The real issue isn’t awareness but communication: teams feel the drag but lack the language and signals to justify action. This gap lets neglect masquerade as “working code,” quietly eroding delivery speed and predictability. The cost is paid daily, not later, in lost time, stalled momentum, and burned-out engineers.

Most engineering leaders know the codebase is degrading. They can feel it in the way estimates drift, in the silence before someone says "it's more complicated than we thought," in the senior engineer who stops raising issues because they've stopped expecting anything to change.

What they struggle to do is say it clearly enough to act on it. "The code quality is slipping" doesn't move budgets. "We need two sprints to refactor" sounds like engineering wanting to rewrite things that already work. So nothing happens. And the cost, which was always present, keeps compounding.

This piece is about that gap: between sensing a problem and having the language and signals to act on it. And what it actually costs when that gap stays open.

Across every team we've worked with, from early-stage startups to mature engineering orgs, the story is the same. Nobody set out to accumulate technical debt. What happened was a thousand individually rational decisions made under pressure, none of which felt significant at the time, all of which are now baked into the system.

Ward Cunningham's original framing of "technical debt" described a deliberate trade-off: ship something that works now, implement it properly later. That can be strategic when used intentionally. But what most teams actually carry isn't strategic debt. It's accumulated neglect. And the interest on that neglect isn't paid at some future date. It's paid in every sprint, by every engineer, every day.

The numbers confirm what anyone managing delivery already feels. Atlassian's 2024 State of Developer Experience Report, based on a survey conducted with DX and Wakefield Research of more than 2,100 developers and managers globally, found that 69 percent of developers lose eight or more hours per week to inefficiencies, roughly 20 percent of their working time. The leading source of that friction? Technical debt, cited by 59 percent of respondents. For a 500-person engineering organization, Atlassian estimates this translates to approximately $6.9 million in lost productivity annually. Not two sprints. Not a Q3 initiative. A permanent drag on every team, every week.

When you're running delivery, you feel this as a slow erosion of predictability. Estimates get a little less reliable. Onboarding takes a little longer. Review cycles stretch a bit. None of it triggers an alert. And because nothing triggers an alert, nothing triggers urgency. The cost is diffuse, hard to attribute to any single decision, and spread across thousands of small daily frictions.

McKinsey's 2023 research on technical debt puts a sharper number on it: tech debt accounts for roughly 40 percent of IT balance sheets, and some 30 percent of CIOs report that more than 20 percent of their technical budget nominally dedicated to new products is actually diverted to resolving existing debt. Companies in the bottom 20th percentile on tech debt, meaning those carrying the most severe debt, are 40 percent more likely to have incomplete or canceled IT modernization efforts than those in the top 20 percent.

These aren't speculative projections. They describe the state of most engineering organizations right now. The teams feeling the slowdown aren't imagining it. They're paying for decisions nobody remembers making.

The deeper problem, and this is one we've watched play out dozens of times, is a translation failure. Engineers experience technical debt as friction: estimates that feel wrong, changes that take longer than they should, systems that require more context to touch than they ought to. But friction is hard to price. And anything that can't be priced doesn't get prioritized.

When a PM or executive asks "how much technical debt do we have," the answer rarely lands. "A lot" doesn't justify delaying a feature. "The auth module is brittle" doesn't explain why velocity dropped 20 percent over four months. "We need time to refactor" sounds like a request to pause delivery, not a diagnosis of what's actually slowing it.

So the conversation stalls. Nothing gets scheduled. And the business defaults to treating working code as good-enough code.

That assumption holds until it doesn't. A two-day estimate becomes two weeks. A deployment breaks something unrelated. A senior engineer quietly starts interviewing elsewhere. By that point, the debt has been compounding for months. The failure feels sudden. It wasn't.

This isn't a new dynamic. Software systems that evolve under real-world constraints grow more complex unless that growth is actively counteracted, and perceived quality degrades unless the system is rigorously maintained. Anyone who has worked on a long-lived codebase has seen this firsthand. The drift is structural, not incidental. Small amounts of accumulated debt don't slow teams proportionally. They create compounding friction where every subsequent change is harder than the last.

The system doesn't fail catastrophically. It just gets slower, quietly, until slow becomes the baseline and the baseline stops feeling like a problem worth naming.

This is something we see again and again: every individual PR passes review cleanly. The code works. The tests pass. The logic is sound. But the system is quietly getting harder to change.

The same files keep appearing in bug fixes. Conditional logic accumulates in functions that were supposed to be simple. Five different patterns emerge for the same operation. No single pull request caused this. The trajectory did, and code review, by design, doesn't track trajectory.

If you've managed delivery long enough, you've seen the moment when someone opens a file for what should be a simple change and realizes it's become a minefield. That's the third conditional someone added this quarter to a function already carrying too much weight. That's a dependency introduced months ago that nobody flagged because it passed review. Each decision looked fine in isolation. The trend didn't.

This is the gap between reviewing code and managing quality. Code review catches local issues. Quality management requires tracking what's changing over time: complexity trends, coverage drift, hotspot accumulation, the same files appearing again and again in bug fixes.

Ask any developer what frustrates them most about their day-to-day work, and technical debt will be at or near the top of the list. That frustration isn't coming from any single PR. It's coming from the accumulation of trajectory-level problems that no individual review was designed to catch.

Most teams don't gain visibility into this trajectory until someone goes looking for it. And in our experience, they usually go looking because delivery has already started to suffer.

Not all technical debt is failure. Some of it is strategy. The right move is sometimes to ship something that works today and refactor it later, when the use case is clearer, the team is larger, or the stakes are higher. We've made that call ourselves, and we've helped teams make it deliberately.

The teams we see manage debt well aren't the ones with the cleanest codebases. They're the ones where an engineer can point to a shortcut and say exactly when they plan to come back to it, and the PM knows that plan exists.

What distinguishes intentional debt from neglect is not the decision itself. It's whether the decision was made consciously, with alignment on what you're deferring and when you'll address it. Intentional debt has shared understanding: the team knows what they borrowed against the future, the business understands the trade-off, and there's a shared expectation of when it gets paid down.

Accidental debt has none of that. It emerges from pressure and incomplete information, one pull request at a time, until nobody can trace how the system got this way.

The first kind is manageable. The second kind is entropy. And entropy, left unobserved, compounds.

The teams that manage technical debt effectively share one trait: they measure it continuously, not episodically. Instead of waiting for planning cycles to assess code health, they track leading indicators in real time. Complexity trending up. Test coverage trending down. Review cycle time increasing. Specific modules appearing repeatedly in bug fixes and reopened tickets.

These signals already exist in the systems teams use every day: version control, CI/CD pipelines, code analysis tooling. The difference is whether anyone is treating them as delivery risk or as background noise. In most teams we've worked with, nobody is. The signals are there. They're just not connected to anyone's attention.
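As a minimal sketch of what treating one of these signals as a trend could look like, the Python below compares the mean of a metric over the latest 30-day window with the mean over the window before it. The `Sample` shape, the function names, and the assumption of roughly daily readings are ours for illustration; the readings themselves would come from whatever code analysis or CI tooling a team already runs.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import mean


@dataclass(frozen=True)
class Sample:
    """One reading of a code-health metric (hypothetical shape)."""
    day: date
    value: float


def window_mean(samples, start, end):
    """Mean of samples with start <= day < end, or None if the window is empty."""
    values = [s.value for s in samples if start <= s.day < end]
    return mean(values) if values else None


def rolling_trend(samples, today, window_days=30):
    """Relative change between the latest window and the one before it.

    Returns, for example, 0.25 for a 25 percent increase. Returns None
    when either window has no data or the earlier mean is zero.
    """
    window = timedelta(days=window_days)
    end = today + timedelta(days=1)      # make the latest window include today
    recent_start = end - window
    previous_start = recent_start - window
    recent = window_mean(samples, recent_start, end)
    previous = window_mean(samples, previous_start, recent_start)
    if recent is None or previous is None or previous == 0:
        return None
    return (recent - previous) / previous
```

A positive result on a complexity metric, or a negative one on test coverage, is the kind of drift worth a closer look. How large a change should prompt action is a judgment call for each team.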

The 2024 DORA Accelerate State of DevOps Report, the latest output of Google's decade-long research program spanning more than 39,000 professionals, reinforces something we've observed firsthand: the teams that ship reliably aren't the ones working hardest. They're the ones with automated quality gates and continuous monitoring built into their delivery pipeline. They don't rely on manual review alone to catch systemic problems. They build systems that surface drift before it compounds.

One finding from the 2024 DORA report resonated with us in particular. Even AI-driven productivity gains didn't automatically translate into better delivery outcomes. Teams using AI tools tended to produce larger changesets, which DORA's research has long associated with higher risk. The bottleneck wasn't effort. It was the lag between when quality started to degrade and when someone had the information to intervene. That lag is exactly what we've spent years trying to close.

When you can see that lead time on a specific flow has doubled in a month, or that one module appears in nearly half of all recent bug fixes, technical debt stops being abstract. It becomes a measurable constraint on the work you're trying to ship.

Practically, this means three things most teams could implement without new tooling. First, track complexity trends at the file and module level over rolling 30-day windows. Second, flag any file that appears in more than 20 percent of bug-fix commits as a hotspot requiring deliberate attention. Third, treat PR review cycle time as a delivery metric, because when PRs sit for more than three days, you're not just reviewing code. You're accruing delay.
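The second and third checks lend themselves to a short sketch. The Python below assumes you have already extracted, from version control and your PR system, a list of the files touched by each bug-fix commit and the time each open PR was opened. The function names and input shapes are hypothetical, and how a commit gets classified as a bug fix (a message convention, a linked ticket type) is left to the team.

```python
from collections import Counter
from datetime import datetime, timedelta


def find_hotspots(bugfix_commits, threshold=0.20):
    """Return files touched by more than `threshold` of bug-fix commits.

    `bugfix_commits` is a list with one collection of file paths per
    commit already identified as a bug fix. The result maps each
    hotspot path to its share of bug-fix commits.
    """
    total = len(bugfix_commits)
    if total == 0:
        return {}
    # set(files) ensures a file counts at most once per commit.
    counts = Counter(path for files in bugfix_commits for path in set(files))
    return {
        path: count / total
        for path, count in counts.items()
        if count / total > threshold
    }


def aging_prs(opened_at_by_pr, now, max_age=timedelta(days=3)):
    """Return IDs of open PRs waiting longer than `max_age`, oldest first."""
    stale = [
        (opened_at, pr_id)
        for pr_id, opened_at in opened_at_by_pr.items()
        if now - opened_at > max_age
    ]
    return [pr_id for _, pr_id in sorted(stale)]
```

Both thresholds are parameters because the 20 percent and three-day figures are starting points, and a team with a very small or very large codebase may want to tune them.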

These aren't theoretical recommendations. They're patterns we've seen work across teams of very different sizes and maturity levels. But even when teams know what to track, the harder problem remains: someone still has to watch, follow up, and escalate when things stall. In most organizations, that someone is a human who is already stretched thin.

We built DevHawk because we kept watching the same failure modes repeat: late signals, manual status work, risk discovered too late. Most tools on the market were optimizing for reporting, not execution. Dashboards show what happened. They don't reduce the time between "something is drifting" and "someone did something about it."

DevHawk monitors the signals that exist across your delivery stack, including Jira, GitHub, and Slack, and surfaces patterns that indicate drift before they become missed commitments. When a specific module keeps appearing in reopened tickets, when PRs in a particular area are aging past review thresholds, when work sits in "In Progress" with no commits for 48 hours, DevHawk identifies it and triggers a follow-up to the right owner. If it still doesn't move, it escalates based on rules your team defines.
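To make the follow-up-then-escalate idea concrete, here is a rough Python sketch of such a rule. It is not DevHawk's implementation: the `Ticket` fields, the `next_action` function, and the 24-hour escalation delay are all assumptions chosen for illustration. Only the 48-hour "no commits" threshold comes from the description above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Ticket:
    """Hypothetical ticket record; field names are illustrative only."""
    key: str
    owner: str
    status: str
    last_commit_at: datetime
    followed_up_at: Optional[datetime] = None


def next_action(
    ticket,
    now,
    stale_after=timedelta(hours=48),      # from the text: no commits for 48 hours
    escalate_after=timedelta(hours=24),   # assumed delay before escalating
):
    """Return 'none', 'follow_up', or 'escalate' for a single ticket."""
    if ticket.status != "In Progress":
        return "none"
    if now - ticket.last_commit_at < stale_after:
        return "none"
    # A follow-up only counts if it was sent after the most recent commit.
    if ticket.followed_up_at is None or ticket.followed_up_at < ticket.last_commit_at:
        return "follow_up"
    if now - ticket.followed_up_at >= escalate_after:
        return "escalate"
    return "none"
```

The rule depends entirely on `owner` being accurate: a follow-up sent to the wrong person starts the escalation clock without anyone being able to act on it.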

If you're managing distributed delivery across time zones, you know where risk compounds: in the lag between signal and action. A blocker shows up at 4pm Pacific, the owner is asleep in Europe, and by the time anyone notices, a day is gone. DevHawk exists to reduce that latency, not by replacing judgment, but by ensuring the right person knows what needs attention before a standup surfaces it 24 hours too late.

One practical note: DevHawk works best when ownership is clear. If tickets don't have real owners, or if "done" means different things across the team, automation amplifies the confusion rather than resolving it. We learned this the hard way. The prerequisite for any follow-up loop is knowing who is accountable for what.

Technical debt is not an engineering problem, and it is not a future problem. It is a delivery problem, and it is a present one. Every week that systemic quality issues stay invisible is a week they are being paid for, in slower estimates, longer onboarding, more fragile deployments, and engineer attention spent on workarounds instead of product work.

The teams that navigate this well are not the ones who eliminate debt. That is not a realistic goal for any team shipping continuously under real constraints. They are the ones who build feedback loops that surface quality issues before they degrade velocity, so the decision becomes whether to address a small problem now or pay for a larger one later.

That decision, made repeatedly, with good information, is what separates teams that stay fast from teams that wonder why everything is taking longer.

How do you measure technical debt in a meaningful way?

Most teams treat technical debt as a feeling rather than a metric. The most practical approach is to track leading indicators continuously: complexity trends at the file or module level, test coverage drift over rolling 30-day windows, PR review cycle times, and hotspot files that appear repeatedly in bug-fix commits. If a file shows up in more than 20 percent of bug fixes, it warrants deliberate attention. These signals already exist in version control and CI/CD pipelines. The difference is whether your team treats them as delivery risk or background noise.

What is the difference between intentional technical debt and accidental technical debt?

Intentional technical debt is a conscious decision: you ship something that works now with a shared understanding of what you are deferring and when you will address it. The team knows the trade-off, and the business has agreed to it. Accidental technical debt is the opposite. It accumulates from pressure and incomplete information, one pull request at a time, until nobody can trace how the system got to its current state. The first kind is manageable. The second kind compounds quietly and is far more expensive to address because no one planned for it.

Why is technical debt so hard to communicate to non-technical stakeholders?

Engineers experience technical debt as friction: estimates that feel wrong, changes that take longer than they should, systems that require excessive context to modify safely. But friction is difficult to price. When a PM or executive asks "how much technical debt do we have," the answer rarely translates into something actionable. The key is connecting debt to delivery outcomes: longer lead times, less reliable estimates, increased rework, and slower onboarding. When debt is framed as a measurable constraint on shipping speed rather than an abstract code quality issue, it becomes easier to prioritize.

Sources cited: